So, you’ve got Security Onion (SO) running from the Security-Appliance-in-a-Box via Ansible. Now what? How do you start ingesting logs from your other devices into the included Elastic instance? I’m glad you asked! There are a few steps you’ll need to follow.

Allow Access

First, you’ll need to open the firewall to allow incoming TCP traffic on port 9200, the Elasticsearch REST API port. You can limit access by host or subnet, or open it to all hosts as shown below.

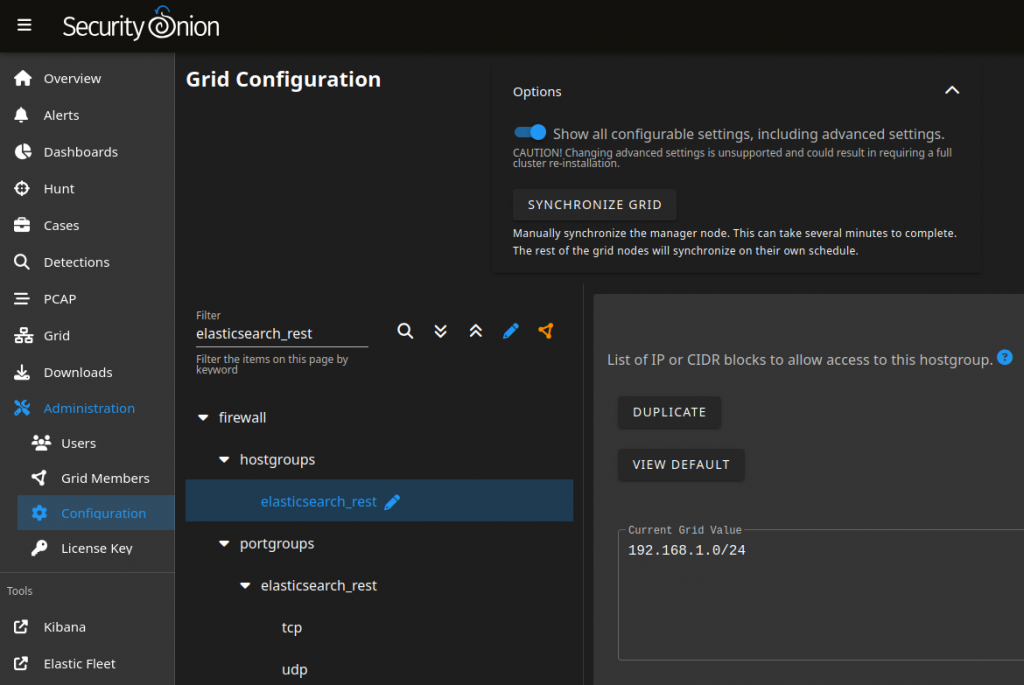

For version 2.4, you can do this under Administration > Configuration, but you need to enable the advanced settings first.

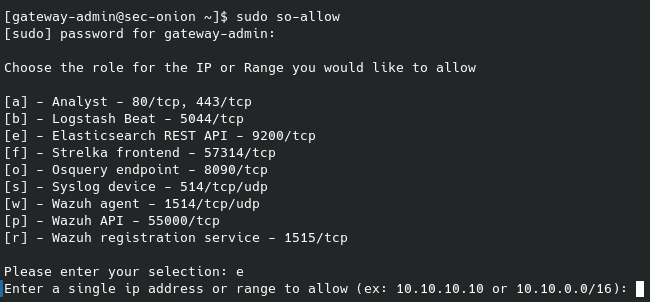

On legacy versions, you do this from the command line, as shown below.

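```shell
sudo firewall-cmd --permanent --add-port=9200/tcp
# --permanent only changes the saved configuration; reload to apply it now
sudo firewall-cmd --reload
```

On legacy versions, you’ll also need to authorize the subnet/host with SO (using the so-allow command) to use the Elasticsearch REST API.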
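Before moving on, it can help to confirm the port is actually reachable from a host that will ship logs. The sketch below assumes bash is installed (for its /dev/tcp redirection) along with the coreutils timeout command; SO_HOST is a hypothetical variable you set to your appliance’s address. A successful connection only shows the firewall is open, not that the host has been authorized with so-allow.

```shell
#!/bin/sh
# Sketch: check that TCP/9200 on the Security Onion host accepts connections.
# Assumes bash (for /dev/tcp) and coreutils' timeout are installed.
# SO_HOST is a hypothetical variable: your appliance's hostname or IP.

# port_open HOST PORT: exit 0 if a TCP connection succeeds within 3 seconds.
port_open() {
  timeout 3 bash -c "</dev/tcp/$1/$2" 2>/dev/null
}

if [ -n "${SO_HOST:-}" ]; then
  if port_open "$SO_HOST" 9200; then
    echo "9200/tcp is reachable on $SO_HOST"
  else
    echo "9200/tcp is NOT reachable on $SO_HOST; re-check the firewall rules" >&2
  fi
fi
```

Run it as `SO_HOST=so.example.lan sh check-port.sh` (both names are placeholders) from each host you plan to collect logs from.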

Configure Elasticsearch

A couple of things need to be done to allow both ingesting and viewing your data. The first is to create a role for publishing events. I’ve granted it the privileges below, which include the ability to create indices. This is the minimum set I’ve found: removing any of them prevents the agents from sending logs.

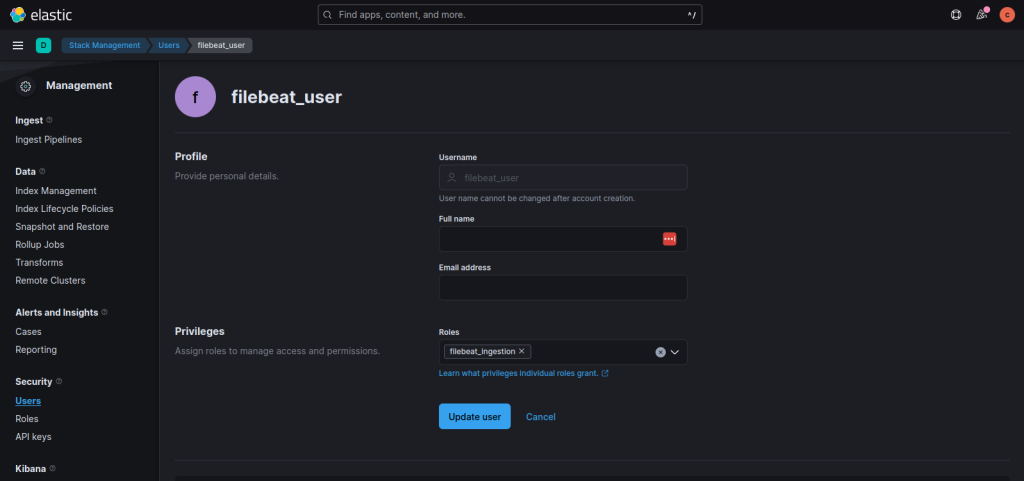
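If you’d rather script this than click through Kibana, the Elasticsearch security API can create a similar role. This is a sketch, not a copy of the screenshot above: the privilege list is modeled on the publishing privileges in Elastic’s Filebeat security documentation and may differ from the set shown. The role name filebeat_writer and the SO_HOST and ES_ADMIN variables are hypothetical; ES_ADMIN must be an account allowed to manage security, and curl -k skips certificate verification.

```shell
#!/bin/sh
# Sketch: create a publishing role through the Elasticsearch role API.
# Hypothetical names: filebeat_writer (role), SO_HOST, ES_ADMIN ("user:password").
# Privileges are modeled on Elastic's Filebeat publishing docs; adjust them
# to match what you configured in Kibana.

# role_body: print the JSON body for PUT /_security/role/<name>.
role_body() {
  cat <<'EOF'
{
  "cluster": ["monitor", "read_ilm", "read_pipeline"],
  "indices": [
    {
      "names": ["filebeat-*"],
      "privileges": ["create_doc", "create_index", "view_index_metadata", "auto_configure"]
    }
  ]
}
EOF
}

if [ -n "${SO_HOST:-}" ] && [ -n "${ES_ADMIN:-}" ]; then
  # -k skips TLS verification; use --cacert with the SO CA if you have it.
  role_body | curl -sk -u "$ES_ADMIN" -X PUT \
    -H 'Content-Type: application/json' \
    "https://$SO_HOST:9200/_security/role/filebeat_writer" --data-binary @-
fi
```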

Next, create a user for your agents to authenticate as, and assign it the publishing role.
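The same step can be scripted with the Elasticsearch user API. In this sketch, filebeat_agent (the user), filebeat_writer (a hypothetical name for the publishing role you created), SO_HOST, ES_ADMIN, and AGENT_PASSWORD are all placeholders, and the JSON is built with printf, so it assumes the password contains no double quotes or backslashes.

```shell
#!/bin/sh
# Sketch: create the user your Filebeat agents will authenticate as.
# Hypothetical names: filebeat_agent (user), filebeat_writer (publishing role),
# SO_HOST, ES_ADMIN ("user:password"), AGENT_PASSWORD.

# user_body PASSWORD ROLE: print the JSON body for PUT /_security/user/<name>.
# No JSON escaping is done, so PASSWORD must not contain " or \.
user_body() {
  printf '{"password": "%s", "roles": ["%s"]}\n' "$1" "$2"
}

if [ -n "${SO_HOST:-}" ] && [ -n "${ES_ADMIN:-}" ] && [ -n "${AGENT_PASSWORD:-}" ]; then
  user_body "$AGENT_PASSWORD" filebeat_writer | curl -sk -u "$ES_ADMIN" -X PUT \
    -H 'Content-Type: application/json' \
    "https://$SO_HOST:9200/_security/user/filebeat_agent" --data-binary @-
fi
```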

Data Views and Indices

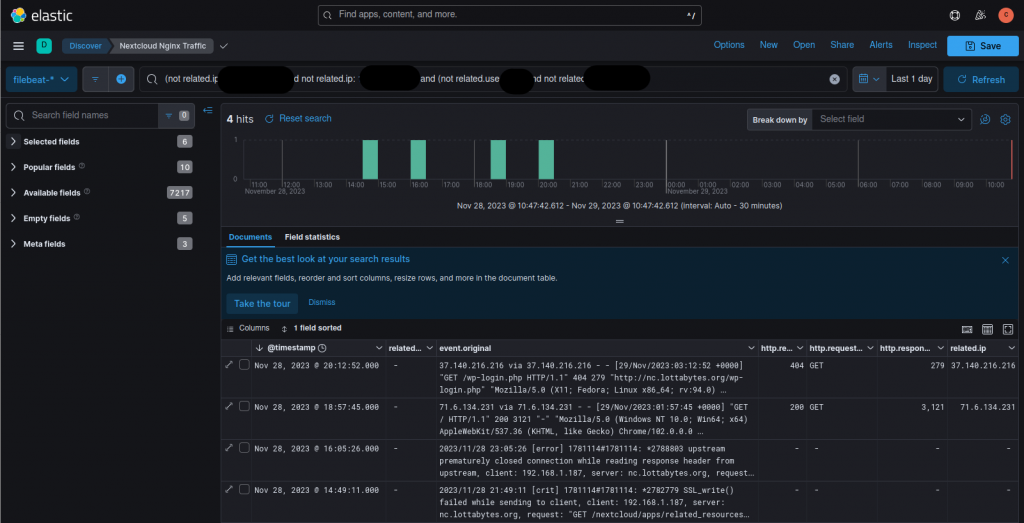

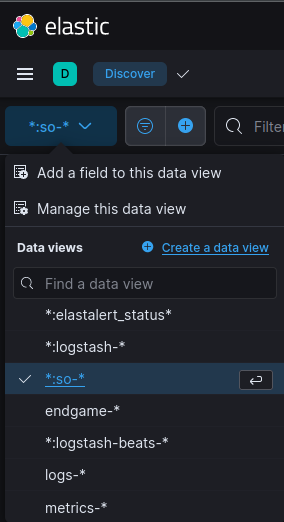

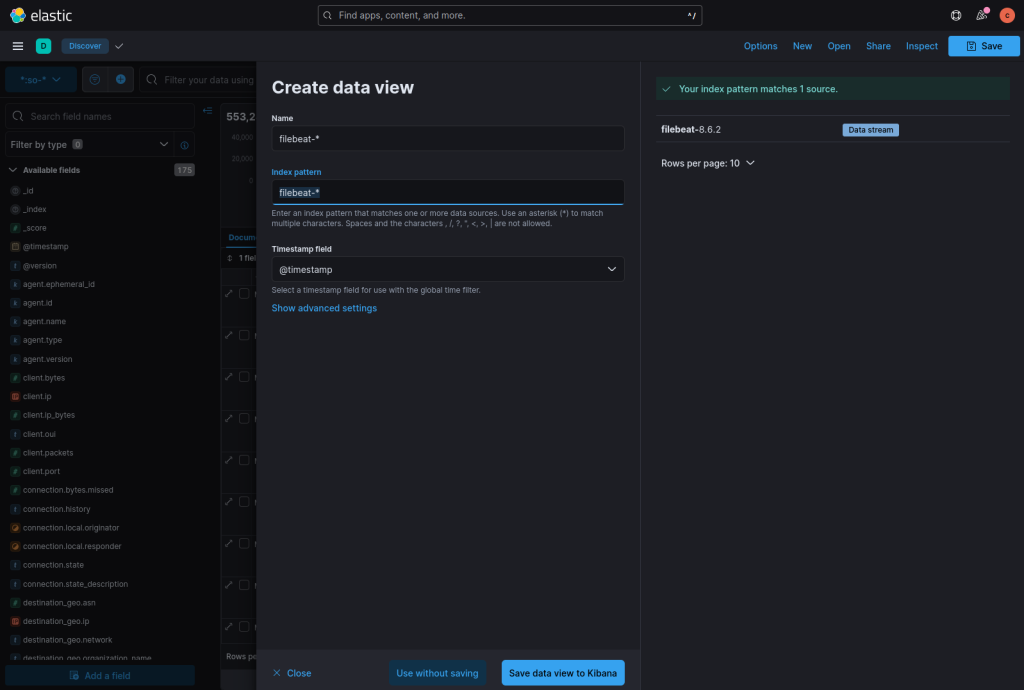

By default, there’s no filebeat data view, so you can’t see your incoming logs. To remedy that, click the Create a data view button.

Enter filebeat-* as both the Name and the Index pattern; you should see that a matching source already exists. If you don’t, your agents probably aren’t sending logs yet.
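To check from the command line whether any Filebeat data has arrived, you can ask Elasticsearch’s _cat/indices API for the filebeat-* indices and total their document counts. This is a sketch; SO_HOST and ES_ADMIN are hypothetical variables, and a failed request (bad credentials, blocked port) is reported the same way as “no documents”.

```shell
#!/bin/sh
# Sketch: check whether any filebeat-* data has reached Elasticsearch yet.
# SO_HOST and ES_ADMIN ("user:password") are hypothetical variables.

# sum_docs: read `_cat/indices?h=index,docs.count` lines on stdin and print
# the total document count across all listed indices.
sum_docs() {
  awk '{ total += $2 } END { print total + 0 }'
}

if [ -n "${SO_HOST:-}" ] && [ -n "${ES_ADMIN:-}" ]; then
  # -f makes curl print nothing on HTTP errors, so failures sum to 0.
  docs=$(curl -skf -u "$ES_ADMIN" \
    "https://$SO_HOST:9200/_cat/indices/filebeat-*?h=index,docs.count" | sum_docs)
  if [ "$docs" -gt 0 ]; then
    echo "filebeat-* holds $docs documents; the data view should match."
  else
    echo "No filebeat-* documents found; check the agents and credentials." >&2
  fi
fi
```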

Agent Configuration

There’s already good documentation on installing the Filebeat agent, so I won’t cover that, except to note that the Filebeat docs page on SSL settings is important because HTTPS is the default. Beyond installation, I want to call out that you need to edit the configuration of the relevant module (e.g., nginx); otherwise you won’t see logs, even after enabling the module.
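Because HTTPS is the default, the agent has to trust the certificate Security Onion presents. As a sketch, the output section of filebeat.yml could look like the snippet rendered below. The host name, user name, CA file path, and the Filebeat keystore key ES_PWD are hypothetical, obtaining the SO CA certificate isn’t covered here, and each setting should be checked against the Filebeat SSL docs for your version.

```shell
#!/bin/sh
# Sketch: render an output.elasticsearch section for filebeat.yml that talks
# HTTPS to Security Onion. Host, user, and CA path are hypothetical inputs.
# The password is taken from a Filebeat keystore key named ES_PWD (assumed),
# created with: sudo filebeat keystore add ES_PWD

# render_output HOST USER CA_FILE: print the YAML snippet to stdout.
render_output() {
  cat <<EOF
output.elasticsearch:
  hosts: ["https://$1:9200"]
  username: "$2"
  password: "\${ES_PWD}"
  ssl.certificate_authorities: ["$3"]
EOF
}
```

For example, `render_output so.example.lan filebeat_agent /etc/filebeat/so-ca.crt` prints a section to merge into /etc/filebeat/filebeat.yml; afterwards, `sudo filebeat test output` checks the connection and TLS handshake with that configuration.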

Example: I want to collect nginx logs.

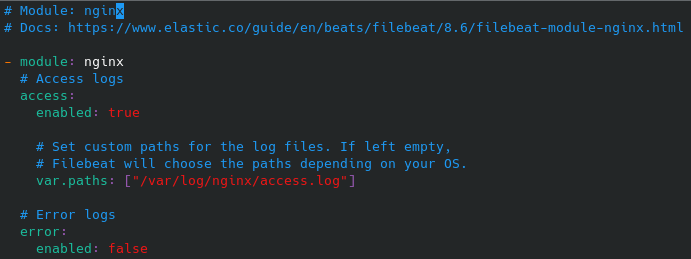

Step 1: Update the nginx module configuration at /etc/filebeat/modules.d/nginx.yml (until the module is enabled, Filebeat ships this file as nginx.yml.disabled). Mark the sections you want (e.g., access and error) as enabled, and provide the paths to your log files as required.
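A minimal version of that module file, with both filesets enabled, can be generated as below. The log paths are arguments; the example values are common nginx defaults and may not match your host. Since Filebeat ships the file as nginx.yml.disabled until the module is enabled, the usage comment writes to that name.

```shell
#!/bin/sh
# Sketch: render the nginx module config with access and error filesets enabled.
# Paths are passed in; the example values are common defaults, not guarantees.

# render_nginx_module ACCESS_GLOB ERROR_GLOB: print the module config.
render_nginx_module() {
  cat <<EOF
- module: nginx
  access:
    enabled: true
    var.paths: ["$1"]
  error:
    enabled: true
    var.paths: ["$2"]
EOF
}

# Example (requires root; targets the not-yet-enabled file name):
# render_nginx_module '/var/log/nginx/access.log*' '/var/log/nginx/error.log*' \
#   | sudo tee /etc/filebeat/modules.d/nginx.yml.disabled >/dev/null
```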

Finally, enable the module:

```shell
sudo filebeat modules enable nginx
```

There you have it. Now your SO appliance is beginning to ingest log data from your remote hosts.
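If logs still don’t show up, keep in mind that with the default filebeat.yml, Filebeat reads modules.d at startup and doesn’t reload it, so a running agent typically needs a restart after a module is enabled. The sketch below confirms the module is listed as enabled before restarting; it assumes `filebeat modules list` prints an “Enabled:” section followed by a “Disabled:” section with one module name per line, and that Filebeat runs as a systemd service.

```shell
#!/bin/sh
# Sketch: confirm a module is enabled, then restart Filebeat so it is loaded.
# Assumes `filebeat modules list` prints "Enabled:" then "Disabled:" sections,
# one module name per line, and that Filebeat is managed by systemd.

# module_enabled NAME: read `filebeat modules list` output on stdin and exit 0
# if NAME appears in the Enabled section.
module_enabled() {
  awk -v m="$1" '
    /^Enabled:/  { in_enabled = 1; next }
    /^Disabled:/ { in_enabled = 0 }
    in_enabled && $1 == m { found = 1 }
    END { exit !found }
  '
}

if command -v filebeat >/dev/null 2>&1; then
  if sudo filebeat modules list | module_enabled nginx; then
    sudo systemctl restart filebeat
  else
    echo "nginx module is not enabled" >&2
  fi
fi
```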